Half of Americans want social media companies to remove ‘misinformation’ and ‘hate’

Elon Musk’s purchase of Twitter triggered the resurgence of a years-long public debate over content moderation on social media. At its core, the debate focuses on whether social media companies have an obligation to remove — or at least flag — posts that contain “hate speech” or “misinformation.” And polling reveals that Americans’ belief in free speech and expression is tenuous at best.

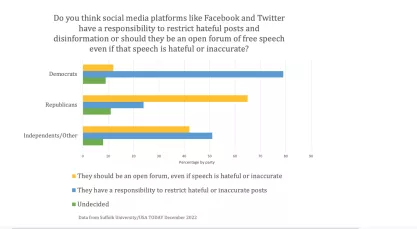

A December survey by Suffolk University and USA TODAY asked 1,000 people whether they think “social media platforms like Facebook and Twitter have a responsibility to restrict hateful posts and disinformation,” or whether these platforms “should be an open forum of free speech even if that speech is hateful or inaccurate.” More than half of respondents (51.7%) supported speech restrictions, while 38.5% supported an open forum without speech restrictions.

The survey demonstrated a clear partisan divide: 79% of Democrats supported speech restrictions, compared to only 24% of Republicans. Independents split at 51%.

These results are concerning, especially because “hate” and “misinformation” lack clear definitions. As FIRE senior fellow Nadine Strossen writes, “Many people have hurled the epithet ‘hate speech’ against a diverse range of messages that they reject, including messages about many important public policy issues.”

The term “misinformation,” too, is vague. As Supreme Court Justice Samuel Alito wrote in his dissent in United States v. Alvarez:

> There are broad areas in which any attempt by the state to penalize purportedly false speech would present a grave and unacceptable danger of suppressing truthful speech… The point is not that there is no such thing as truth or falsity… or that the truth is always impossible to ascertain, but rather that it is perilous to permit the state to be the arbiter of truth… Today’s accepted wisdom sometimes turns out to be mistaken.

Previous polling also shows an appetite for strict content moderation by the government or by the social media platforms.

In the weeks prior to this year’s midterm elections, the Knight Foundation and Ipsos took a closer look at public perception of election misinformation on social media. Their survey found that 76% of people agree that “false information” poses a serious problem. As a remedy, almost half (49%) of all those surveyed believe social media companies should “more aggressively remov[e] content that violates their standards” on misinformation.

Thirty-three percent of respondents favor government regulation of misinformation on social media, while 39% oppose it and 27% have no opinion.

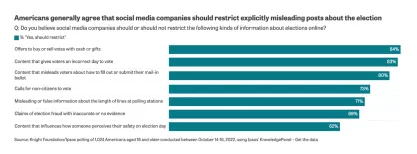

The survey also asked participants whether social media companies should restrict posts about specific topics relating to election misinformation. For instance, most respondents believe social media companies should restrict posts that offer to “buy or sell votes” (84%), that give “voters an incorrect day to vote” (83%), or that mislead “voters about how to fill out or submit their mail-in ballot” (79%). Moreover, 69% of respondents want social media companies to restrict “claims of election fraud with inaccurate or no evidence.”

Respondents also appear more concerned that others will fall victim to election misinformation than that they themselves will: 61% fear that members of their community will decide how to vote based on false or misleading information, while only 25% fear that they will do so themselves. These data are consistent with the third-person effect, the tendency for people to believe that media has a “greater effect on others than on themselves.” Some experts argue the third-person effect drives attitudes toward censorship.

The Knight Foundation and Ipsos also conducted a survey in the summer of 2021 to gauge sentiments about hate speech on social media. That survey shows that 70% of respondents believe hate speech is a serious problem on social media. Like the respondents to the survey on misinformation, half of respondents favored social media companies “more aggressively removing content that violates their standards.”

An October survey by YouGov found much stronger support for restricting hate speech. When asked directly whether “social media companies should … suspend a user’s account for posting hate speech,” about three-in-four respondents (73%) answered affirmatively.

Given that the December poll by Suffolk and USA TODAY found that only 52% of Americans favor social media companies restricting hateful posts, the much larger percentage from the YouGov survey perhaps results from a different sample, or perhaps from the specific questions asked of respondents.

The Suffolk/USA TODAY poll asked a question that forced respondents to weigh two different values and ultimately choose one. Specifically, it asked if social media platforms “have a responsibility to restrict hateful or inaccurate posts,” or if they “should be an open forum, even if speech is hateful or inaccurate.” The survey therefore directly pits free speech against mitigating hate — a misleading binary, because hate speech on social media is arguably better mitigated by counterspeech than censorship.

Meanwhile, the YouGov survey never mentions free speech. It merely asks whether respondents think social media platforms should “suspend a user’s account for posting hate speech.”

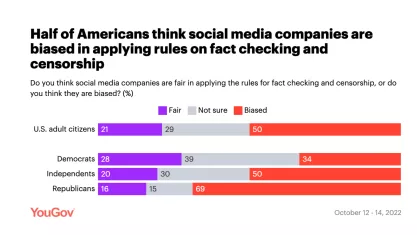

Finally, Americans remain divided about whether they think social media companies are fair or biased in applying rules on fact checking and censorship in general. Overall, half of respondents felt that social media companies are biased in their content moderation, while only 21% felt they are fair. Republicans (69%) were much more likely than Democrats (34%) to say that social media companies are biased.

Because “hate” and “misinformation” are such subjective terms, social media platforms might remove content that some users believe to be legitimate public discourse. It therefore makes sense that a large number of respondents see social media companies as biased.

Before calling on social media companies to censor “hate” and “misinformation,” then, the public should pause and remember that these concepts are fuzzier than they might seem.
