FIRE Report on Social Media 2024

Executive Summary

With as many as 5.17 billion accounts worldwide, social media is perhaps the most powerful tool in the history of mankind for average citizens to express themselves and be heard.

Given freedom of expression’s central role in democracy, and social media’s immense popularity as a venue for public discussion, freedom of speech on social media is of critical concern.

As the First Amendment’s prohibition on censorship makes clear, the government itself poses the greatest threat to free speech rights. This is no different with regard to social media. Private social media platforms have a First Amendment right to determine what content to allow on their platforms, but this has not stopped some states from trying to impose unconstitutional curbs on this right, forcing platforms to carry content against their will. Other states have enacted laws imposing age restrictions on access to social media platforms. Meanwhile, members of Congress, the White House, and federal agency employees publicly threaten platforms with reform or repeal of the Section 230 protections that have helped free speech flourish on the internet.

Even more worryingly, leaks have shown extensive demands, threats, and jawboning from government officials to employees of private social media companies behind closed doors, away from public scrutiny.

While the government is and will likely always be the principal threat to free speech online, platforms could do more to foster a culture of free speech. Social media executives Elon Musk and Mark Zuckerberg frequently talk about the importance of free expression principles as core to their platforms’ missions. Indeed, supporting free expression online is a worthy goal for platforms to voluntarily adopt. But in practice, they fall short of their stated goal.

The same leaks that revealed coercive government interference in platforms’ content moderation decisions also revealed that platforms haphazardly applied their own processes and policies to user speech about important political issues. Even when platform rules are neutral as written, moderation decisions are frequently opaque, arbitrary, and biased. When mistakes occur, transparent appeals processes are not always available. Additionally, platforms employ some methods of content moderation, such as throttling or “shadowbanning,” without notifying the users they affect, making these decisions unappealable by design.

Make no mistake: Even when a platform’s content moderation decisions are unwise or biased, the First Amendment protects them. Violating platforms’ rights by empowering the government to dictate content moderation decisions is a cure far worse than the disease.

Government requirements that social media companies impose speech restrictions they would not elect for themselves are bad, regardless of whether the United States or another country imposes them.

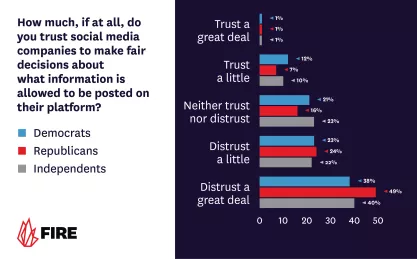

Nevertheless, haphazard and biased moderation decisions come at the cost of public confidence in platforms. New survey research from FIRE and Ipsos (Appendix 2) shows that only 19% of Americans believe social media has had a positive impact on society. While only 21% of Americans agreed with the assertion that “content posted on social media should be less regulated,” it is unclear who the 47% who disagree trust to do the job. Indeed, 64% of Americans do not trust social media companies to fairly decide what to allow on the platforms, and the same percentage of Americans do not trust the government to do so.

In short, public discourse vital to our democracy increasingly occurs on platforms that Americans, by and large, do not trust.

To address this problem, FIRE proposes three principles that are, in our view, essential to putting theoretical commitments to free speech into practice — one that lawmakers should enact and two that any social media company that wishes to promote free expression should voluntarily adopt:

- The law should require transparency whenever the government involves itself in social media moderation decisions.

- Content moderation policies should be transparent to users, who should be able to appeal moderation decisions that affect them.

- Content moderation decisions should be unbiased and should consistently apply the criteria that a platform’s terms of service establish.

Our polling suggests that the public feels strongly about these issues. Seventy-eight percent of respondents believe it is important that social media companies remain unbiased when making content moderation decisions. Seventy-seven percent believe it is important for social media companies to be fully transparent about any government involvement in content moderation decisions. Seventy-one percent believe it is important that social media companies have an appeal process for any content moderation decision.

In this report, FIRE explains these principles, highlights concerning incidents that illustrate their importance, and presents original public opinion polling on Americans’ feelings toward related content moderation issues on social media. We also present a model legislative bill that demands government transparency when agents of the executive branch request that social media companies remove, demonetize, or limit the reach of content.

Introduction: FIRE’s advocacy on social media

The Foundation for Individual Rights and Expression’s first report on social media was published in 2020, when we were still the Foundation for Individual Rights in Education.

In our report “No Comment: Public Universities’ Social Media Use and the First Amendment,” we reviewed how more than 200 state schools chose to limit discussion on their social media accounts. We found that more than 3 in 4 used Facebook’s profanity filters: secret blacklists of words Facebook does not disclose to the public. (Facebook’s parent company, Meta, has donated to FIRE in the past.)

Nearly a third of the schools went beyond that, adding their own customized blacklist of words. Collectively, they banned more than 1,800 terms in various places for various reasons. At the University of North Carolina, Chapel Hill, that list included “Silent Sam,” the name of a controversial Confederate monument later removed from campus. Texas A&M University’s blacklist included references to its rival University of Texas at Austin’s “hook em” gesture. At the University of Kentucky, a kerfuffle between campus dining contractors and animal rights activists led to filters for “chicken” and “chickens.”

In 2022, FIRE became the Foundation for Individual Rights and Expression, and we expanded our mission to encompass free speech issues that occur off campus. This requires us to engage more frequently with issues related to social media.

Social media companies are private actors entitled to impose whatever rules they like regarding what they allow users to post on their sites. At the same time, the largest platforms enjoy a broad market share, and their decisions have an outsized impact on our culture of free speech and our popular understanding of what people can and cannot say. FIRE strives both to protect the rights of platforms to make decisions free from government interference (a constant threat) and to encourage large platforms to voluntarily adopt policies that enable dialogue across differences rather than intensify polarization.

Section 230

47 U.S.C. § 230 — Section 230 for short — prohibits holding any provider (or user) of an interactive computer service (such as a website, app, or social media platform) liable for content posted by another provider or user.

Section 230 made it economically possible for the internet to grow into what it is today. If social media companies had to read every message to find potentially unlawful material, they would probably need to hire as many lawyers as they have users. The law protects online services from suffering punishment or liability both for hosting content they did not create and for taking down content they do not wish to publish. Section 230 has become a convenient target of those who object to various types of online content and of those whose content the service provider deletes, blocks, or deprioritizes.

Why repealing or weakening Section 230 is a very bad idea

Section 230 made the internet fertile ground for speech, creativity, and innovation, supporting the formation and growth of diverse online communities and platforms. Repealing or weakening Section 230 would jeopardize all of that.

FIRE believes Section 230 is more important to free expression than ever. Attempts to erode or put substantial conditions on its protection will only create barriers to entry for new platforms. These barriers would be utterly insurmountable today, when there are billions of posts daily.

Without Section 230, the best-case scenario would be a world with only the largest of the social media platforms we currently have, because the only actors that could possibly pre-screen content at that scale are existing, enormously resourced platforms.

The worst-case scenario would be that massive potential liability forces platforms to pre-screen everything they host and to reject large amounts of content as being beyond their tolerance for risk, leading to a world where those large platforms publish far less content.

Both the best- and worst-case scenarios would be bad for free expression online.

There are existing laws to address the ills created by unlawful content online. Making web providers liable will not stop those ills. It will, however, threaten all the good social media does and can do in the future.

State-mandated moderation practices

In 2021, the Florida and Texas legislatures passed laws that impose unprecedented state control over social media moderation. Florida’s law prohibits platforms from moderating speech from or about political candidates and from “censor[ing], deplatform[ing], or shadow ban[ning] a journalistic enterprise,” while Texas prohibits viewpoint-based moderation altogether.

The U.S. Court of Appeals for the Eleventh Circuit blocked enforcement of key parts of Florida’s law, correctly observing that “the most basic [principle] of the basic—is that ‘[t]he Free Speech Clause of the First Amendment constrains governmental actors and protects private actors.’” The Fifth Circuit, however, erroneously concluded that platforms’ editorial choices in moderating content were “conduct” rather than speech, and upheld the Texas law.

Both laws are before the Supreme Court in NetChoice v. Paxton and Moody v. NetChoice. FIRE submitted an amicus curiae brief in the two cases, in which we argue:

[Florida and Texas] passed laws that placed those decisions under state supervision, forgetting “the concept that government may restrict the speech of some elements of our society in order to enhance the relative voice of others is wholly foreign to the First Amendment.” [...] To paraphrase P.J. O’Rourke, giving state legislatures such power over social media platforms “is like giving whiskey and car keys to teenage boys.”

Because social media companies are private actors with First Amendment rights, the government cannot dictate what these companies must say, or not say, or whose voices they must amplify or suppress. Granting the government the power to intervene in moderation decisions would only subject private speakers to the will of the local ruling party. Far from reducing polarization, this would drive it. These laws are government interference with expression, exactly the threat the First Amendment is meant to prevent. We will be watching Moody and Paxton with great interest.

Florida and Texas are not the only states that have interfered with the editorial rights of social media platforms.

In December 2022, FIRE challenged a New York state law that purports to regulate online “hateful conduct” (i.e., speech) by requiring platforms to publicly explain how they handle posts that “vilify, humiliate, or incite violence” with respect to groups based on race, color, religion, or other protected categories. The law also obligates platforms to allow for and respond to reports of such posts. The U.S. District Court for the Southern District of New York issued a preliminary injunction in February 2023. As of this writing, the case is before the U.S. Court of Appeals for the Second Circuit.

The fact that platforms have a First Amendment right to engage in content moderation doesn’t mean social media moderation decisions aren’t sometimes unfair, arbitrary, or politically biased. It only means we cannot solve inconsistencies or ideological imbalances of power on social media platforms by government action. To attempt to do so would end free expression to “save” it.

Using private accounts for public business

In FIRE’s view, when government actors use personal social media accounts for government business and allow others to contribute and communicate in the replies, they maintain a public forum where viewpoint discrimination (e.g., through blocking or removing replies) is constitutionally prohibited.

In 2023, the Supreme Court took up the issue in a pair of cases, Lindke v. Freed and O’Connor-Ratcliff v. Garnier, addressing exactly when public officials’ use of personal social media accounts constitutes state action. FIRE filed amicus curiae (“friend of the court”) briefs in both cases.

The Supreme Court, in a unanimous decision in Lindke, set forth a new test for determining when a government official’s use of social media is state action. The Court’s standard, which aligns with FIRE’s amicus advocacy, establishes that a public official’s use of a social media account constitutes state action when the official 1) has authority to speak on the government’s behalf, and 2) purports to exercise that authority while posting on social media.

Importantly, that means an official can’t get away with censoring critics simply by carrying out official business on a “personal” account. As the Court observed, “the distinction between private conduct and state action turns on substance, not labels.” The Court recognized that a “close look is definitely necessary in the context of a public official using social media.” This is because “[m]any use social media for personal communication, official communication, or both—and the line between the two is often blurred.”

As we advocated, and as the Court has now reaffirmed, “the state-action doctrine demands a fact-intensive inquiry.” Simply asserting that an account is for personal use is not enough.

How do we make the culture of free expression work online?

While our analysis has so far focused on the law, FIRE’s mandate extends beyond the law to promoting a culture that values free speech, including in the online world.

In the academic world, we have long rated and ranked policies at colleges, encouraging them to adopt more speech-protective rules. Some have called on us to do the same with social media policies. We considered that briefly, until we looked at them and realized they are all equally bad by any standard that matters. A rating that gives everyone a failing score offers little incentive to change.

Additionally, when ranking school policies, our North Star is the First Amendment and how speech is treated on a public campus. We hold private schools that promise free expression to a similar standard. But private schools that do not promise free expression are intentionally not ranked. None of the large social media platforms has explicit policies that guarantee the kind of free expression a student would enjoy on a public campus, but the leaders of many of them, including Elon Musk and Mark Zuckerberg, do talk a big game informally about the importance of free expression to their vision for their platforms.

In that vein we looked to FIRE President and CEO Greg Lukianoff’s open letter to Elon Musk upon Musk’s purchase of Twitter, which laid out advice for how one would go about applying principles of free speech on social media platforms that frame themselves as analogs to the public square.

The letter called on Musk to “blaze a new trail, aspiring toward a positive vision of a freer and more constructive public conversation.” Greg’s advice went unheeded, and FIRE has, on numerous occasions since, criticized the chasm between Musk’s rhetoric surrounding free speech and his anti-speech actions.

But ultimately, the real question is: How do we encourage platforms to engage in moderation practices that allow people to talk across differences, learn from each other, de-radicalize the extremes, and find common ground with the people they believe are enemies?

Who are these principles for?

Principle 1 involves government intervention in speech. It therefore applies only in cases where the government involves itself in decisions over content.

Principles 2 and 3, like Greg’s letter to Elon Musk, are aspirational. Platforms that seek to promote free expression would be wise to adopt them — though we would oppose any government attempt to impose such principles on an unwilling private social media platform.

These platforms, though large and of great influence, are not monopolies. They will rise and fall with the market.

After all, the same right that protects a platform’s ability to make content moderation decisions (even those that are unfair or unwise) protects any group of individuals who come together to form communities around shared hobbies, interests, sports teams, or political beliefs.

Principles 2 and 3 share similarities with the Santa Clara Principles, which came out of an effort convened by the Electronic Frontier Foundation in partnership with a number of civil society organizations. A number of major tech companies, including Apple, Meta, Reddit, and Twitter, have endorsed the Santa Clara Principles. Their authors approached them through a broad lens of human rights and due process, whereas we approached our principles through the lens of what would improve free speech culture. Notably, we reached similar conclusions.

Principle 1: The law should require transparency whenever the government involves itself in social media moderation decisions

Internal documents from Twitter and Facebook raised awareness of a pernicious threat to free speech known as “jawboning” — defined by Will Duffield to mean government actors “bullying, threatening, and cajoling” private actors into suppressing speech that the government could not legally suppress on its own.

When the government compels a private social media company to censor, it violates not only the platform’s First Amendment right to determine what speech it will publish, but also the First Amendment rights of users to speak and receive information through the platform.

FIRE has objected to jawboning many times, including in amicus curiae briefs in the 2024 Supreme Court cases NRA v. Vullo and Murthy v. Missouri. With this report, FIRE presents a solution in the form of a model bill that would require the government to disclose any communication in which a federal agency employee contacts a social media platform regarding protected speech. Making these communications transparent will deter government officials from pressuring platforms to censor protected speech and allow the public to spot instances when jawboning crosses the line into unconstitutional coercion.

The American public is concerned about this issue. Our polling found that 77% of Americans believe it is important for social media companies to “[be] fully transparent about any government involvement in content moderation decisions,” including 79% of Democrats, 75% of Republicans, and 81% of independents.

What we saw in the Twitter and Facebook Files

Following his acquisition of Twitter, Musk released to independent journalists a number of internal documents that offered insight into how Twitter handled controversies including the banning of former President Donald Trump, the Hunter Biden laptop controversy, and ongoing contacts between Twitter executives and members of the U.S. government.

The Twitter Files revealed extensive contact between the FBI and Twitter in the form of regular meetings and many emails to key company decision makers. The FBI warned of foreign interference in the election and flagged posts of alleged misinformation for Twitter to remove. But, concerningly, the flagged posts were largely protected speech, including obvious jokes. The FBI even repeatedly requested the identifying information of users outside of legal channels. Though Twitter did not capitulate in all cases, the conduct described was repeated and aggressive.

The government’s intended pressure was deeply felt by Twitter executives, one of whom emailed Twitter’s head of trust and safety: “We have seen a sustained (if uncoordinated) effort by the [intelligence community] to push us to share more information and change our API policies. They are probing and pushing everywhere they can (including by whispering to congressional staff).”

The Facebook Files revealed similar efforts by a number of executive branch entities under the Biden administration, including the White House and the Surgeon General, which repeatedly urged, and in some instances aggressively demanded, that Facebook remove protected speech, including jokes and memes.

Murthy v. Missouri

In May 2022, state officials along with social media users sued the Biden administration, alleging its persistent jawboning of social media platforms violated the First Amendment.

Many of the instances cited in the case amounted to coercion or excessive entanglement with the platforms’ decision-making. Being on the receiving end of such demands from the White House and law enforcement would be intimidating enough, but this behind-the-scenes pressure was also accompanied by public threats of regulation, including statements from the White House calling for the removal of Section 230 immunity.

The plaintiffs prevailed in the district court and in their appeal to the Fifth Circuit. The case is now before the Supreme Court. As stated in our amicus curiae brief, FIRE supports the Fifth Circuit’s decision holding that the described pattern of jawboning violated the First Amendment. Further, we support the test set forth by the Fifth Circuit for determining when persuasion becomes coercion (and therefore violates the First Amendment).

Legislative options

Until the Twitter and Facebook Files were released, the American public had little to no knowledge that government officials were seeking to have private social media platforms remove or hide protected speech. The users whose speech the platforms’ actions implicated could scrutinize neither the platforms’ decisions nor the government’s involvement.

We cannot assume government actors will respect Americans’ rights behind closed doors, and we cannot challenge rights violations we do not know about. Therefore, transparency in the government’s communication with social media companies is necessary even if the Supreme Court or Congress recognizes strict limits on the government’s authority to pressure social media companies to change their moderation policies.

Transparency should enable the public to know when the federal government is communicating with social media companies and what it’s communicating about. This would help prevent overreach and empower Americans to challenge it when it occurs. Similarly, understanding which social media companies government officials contact, and the existence of any agreements or working relationships between federal officials and social media companies related to content published on the platforms, would provide insight into the occasions when government coercion might occur.

There are several legislative approaches to providing transparency. We briefly discuss two options below.

Mandatory reporting by government employees

The first option is to require federal employees and contractors to publicly disclose their communications with social media platforms when the communications relate to the platform’s treatment of content.

FIRE has drafted model legislation (Appendix 1) that reflects this approach. The model bill, explained in more detail below, requires prompt, public disclosure of communications that meet the following description:

any communication, direct or indirect, from an employee or a government contractor, acting under color of law, to a covered platform for any purpose related to an action taken or not taken by a covered platform with respect to, or as a result of, content published on a covered platform, including content published by the covered platform, or a covered platform’s treatment of content published on a covered platform, including any policy, practice, guidelines, community standards, or other decision-making processes governing such content. The term “covered communication” does not include routine account management of a federal government account on a covered platform, including the removal or revision of the federal government’s content.

This definition casts a wide net in order to capture the full universe of communications that may involve coercive government demands or requests:

- The definition covers both direct and indirect communications. This covers every time the federal government talks to, texts, emails, or otherwise contacts a platform, including when government actors use third parties to convey the message.

- The definition covers employees and contractors acting “under color of law.” The phrase “under color of law,” a legal term of art, in this context means that federal employees must disclose communications to the platforms not only when they are speaking on behalf of the federal government but also when they simply invoke the authority of the federal government. Federal employees, therefore, must disclose conversations when they are, or claim to be, speaking in an official capacity, but not when speaking purely in their personal capacity. This ensures appropriate transparency while preventing intrusion upon federal employees’ right to speak to the platforms in a personal capacity.

- The definition covers communications made “for any purpose.” The model bill makes clear that federal employees are required to report any communication subject to disclosure, regardless of its intended purpose. Whenever a federal employee asks about decisions like reducing or boosting visibility of particular posts, or decisions to demonetize or deplatform specific users, those inquiries are covered even when the reason for asking may seem benign.

- The definition covers communications related to the processes by which a platform makes content moderation decisions. For example, it would cover conversations between a federal employee and a platform about which content is and isn’t — or should or shouldn’t be — permitted according to that platform’s community standards.

The bill, however, excludes communications involving routine account management of a federal government account.

For each covered communication, a federal employee must disclose the following information:

- The name of the federal entity.

- The date on which the covered communication was conveyed.

- The name of the covered platform.

- A description or a copy of the content published on a covered platform, including any publicly disclosed names or usernames provided or referenced in the covered communication.

- A description or, if the covered communication occurred in writing, a copy of the covered communication.

- A description of the intent and rationale for the covered communication.

- A description of any agreement or working relationship, formal or informal, with a covered platform related to the covered communication.

- The statutory authority for the covered communication.
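
As an illustration only, the eight disclosure elements listed above could be captured as a structured record. The Python sketch below is hypothetical: the class name `CoveredCommunicationDisclosure`, its field names, the `missing_fields` helper, and the sample values are all assumptions made for this example and do not appear in the model bill.

```python
from dataclasses import dataclass, fields
from datetime import date


@dataclass
class CoveredCommunicationDisclosure:
    """Hypothetical record mirroring the eight disclosure elements listed above."""

    federal_entity: str        # name of the federal entity
    date_conveyed: date        # date the covered communication was conveyed
    covered_platform: str      # name of the covered platform
    content_description: str   # description or copy of the content at issue
    communication_record: str  # description or, if written, a copy of the communication
    intent_and_rationale: str  # intent and rationale for the communication
    agreements: str            # any formal or informal agreement or working relationship
    statutory_authority: str   # statutory authority for the communication

    def missing_fields(self) -> list[str]:
        """Return the names of text fields left blank (an illustrative completeness check)."""
        return [
            f.name
            for f in fields(self)
            if isinstance(getattr(self, f.name), str)
            and not getattr(self, f.name).strip()
        ]


# Entirely fictional sample values, used only to exercise the sketch.
example = CoveredCommunicationDisclosure(
    federal_entity="Example Agency",
    date_conveyed=date(2024, 1, 15),
    covered_platform="Example Platform",
    content_description="Post by @example_user about a public health topic",
    communication_record="Email asking the platform to review the post",
    intent_and_rationale="Requested review under the platform's own policies",
    agreements="No agreement or working relationship",
    statutory_authority="",  # left blank to show the completeness check
)

print(example.missing_fields())
```

A fixed set of fields like this would make it straightforward to check whether a disclosure is complete before publication, though how disclosures are actually collected and published would depend on the final legislation.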

For the purposes of model legislation, the bill does not include any categorical exemptions such as the exemptions found in the Freedom of Information Act. To the extent that exemptions warrant consideration, FIRE recommends limiting them to the following FOIA exemptions:

- Matters properly classified in the interest of national defense or foreign policy in accordance with an Executive Order (Exemption (b)(1)).

- Records or information compiled for law enforcement purposes, but only for those that “could reasonably be expected to interfere with enforcement proceedings” (Exemption (b)(7)(A)), “could reasonably be expected to disclose the identity of a confidential source” and “information furnished by a confidential source” (Exemption (b)(7)(D)), or “could reasonably be expected to endanger the life or physical safety of any individual” (Exemption (b)(7)(F)).

- Matters containing or relating to “examination, operating, or condition reports prepared by, on behalf of, or for the use of an agency responsible for the regulation or supervision of financial institutions” (Exemption (b)(8)).

Unless the requirements of one of those exemptions are met, disclosure should be required.

We also recommend that any exemptions last only as long as the requirements under the exemption are satisfied. For example, when the disclosure of information required under this bill no longer threatens “the life or physical safety” of an individual, the information required under this bill should be disclosed.

The model bill is also silent on the enforcement mechanism for the disclosure requirement. One option is to incorporate the existing laws governing misconduct by federal employees and contractors.

Although the obligations created by this approach may impose compliance costs on the government, they are in service of safeguarding constitutionally protected rights, and the government can limit the costs by appropriately restricting the range of employees permitted to contact social media companies in circumstances when disclosure is required.

Investigations by government oversight entities

Congress may also consider requiring studies of the federal government’s communications with social media companies by directing federal oversight offices to review those conversations and report their findings to Congress and the public. The Government Accountability Office and the Inspector General of each federal entity routinely investigate transparency matters as part of their oversight roles and are well-positioned to conduct these investigations.

To ensure full transparency, FIRE recommends that any GAO or IG report analyze all communications from federal employees to social media companies, regardless of whether these communications relate to content published on social media platforms. At minimum, the report should provide:

- An overview of the federal entities’ communications with social media platforms.

- The social media companies contacted.

- The reason, intent, or rationale for the communications.

- The existence of any agreement or working relationship related to the communication.

- Any statutory or regulatory framework in which the communications arise.

Principle 2: Content moderation policies should be transparent to users, who should be able to appeal moderation decisions that affect them

Humans are not perfect, and no system that relies on the actions of humans ever will be.

When creating any system of content moderation that prioritizes free expression, it is not enough to make the system as resilient as possible. We must also assume the system will fail and have methods of identifying and correcting those failures. To ensure that a commitment to free speech in theory is realized in practice, it is imperative to have appeal processes capable of correcting system failures.

Transparency is of paramount interest to users. Our polling indicates that 81% of Americans believe it is important for social media companies to notify users about the reason their content has been removed.

Transparency and an appeal process are critical features that judicial and mediation systems rely upon. They have been largely effective, and large platforms dedicated to free speech should allocate the resources to implement robust appeals processes and transparency. However, we must add an important qualifier: To minimize error, the processes should employ human review. We cannot completely offload content decisions to AI, however sophisticated.

Transparency has multiple benefits. First, as Justice Louis Brandeis observed over a century ago: “Publicity is justly commended as a remedy for social and industrial diseases. Sunlight is said to be the best of disinfectants.” If any moderators are tempted to act in self-interest, or to engage in viewpoint discrimination contrary to institutional policy, knowing the public will be able to see them doing so provides a strong disincentive.

Second, the public’s ability to review decisions will allow groups and individuals to ensure fair moderation of content. Some reviewers would likely be nonpartisan; others would represent specific interests. Both should be welcome to hold the moderation system to its own rules.

Third, the ability of the public to review decisions in the aggregate allows researchers to ask big-picture questions about moderation processes. Often, rules that sound reasonable in one instance turn out to suppress speech when applied over thousands. YouTube’s 2019 attempt to moderate hate speech resulted in the suppression of content by historians and journalists. That error came to light in part because journalists had large audiences on other platforms; non-journalist users would have been unlikely to see as rapid a resolution.

Outsourcing and AI content moderation

The volume of content on social media makes the cost of content review substantial. On one day in late 2022, Twitter users posted 375 million tweets; other estimates place the daily number at closer to 500 million. Keeping the cost of content moderation proportional is essential to the ongoing viability of social media. Companies have thus far primarily used two methods of doing so: outsourcing and AI.

Outsourcing

Outsourcing first-pass content moderation, as with outsourcing anything else, can indeed save money. At the federal minimum wage of $7.25 an hour, a content moderator working an eight-hour day would cost an absolute minimum of $58. The minimum wage in the Philippines, for example, ranges from $6 to $10 a day. Indian content moderators have been paid roughly the same.

But lack of direct cultural context and native language fluency can decrease the reliability of moderation decisions. Subtlety, sarcasm, and cultural context are easy to miss or misunderstand, especially by non-native speakers, and have contributed to the wrongful removal of posts. Additionally, controversy-driven user flags that prompt review of old content (sometimes known as “offense archaeology”) can result in bans based on shifting cultural tastes. These reviews are both unfair to the users and unsustainable for platforms, which will find the task exponentially more difficult with every year they exist.

Among the biggest problems with outsourcing is the toll it takes on the reviewers themselves. A nonstop stream of hateful, abusive, violent content, and even illegal content such as child pornography, is unlikely to produce optimal health outcomes in anyone, and some moderators have sued based on the psychological toll the job takes. Whether, and how, the job of content moderation could be made less harmful to human reviewers is unclear, but if the fundamental process of content review is harmful to the people who engage in it, upscaling it might create as many problems as it solves.

AI Moderation

AI moderation seems like the natural replacement. Facebook now boasts that its artificial intelligence systems flag 90% of content the platform eventually acts on before any humans report it. Some estimates put the cost savings of AI moderation at up to 90% over human moderators. AI cannot be psychologically wounded; and its decision-making is, if nothing else, theoretically reflective of one consistent set of biases: those of the programmers and training dataset. That consistency makes AI much easier to audit at scale, and — in theory — much easier to correct at scale.

But AI is not, and will never be, human. AI will inevitably make mistakes humans would easily spot, particularly where context is crucial. Human moderators may face limits in their ability to understand cross-cultural context; AI is, in most present iterations, utterly incapable of understanding cross-cultural context. And social media is where culture develops at a rapid pace, meaning moderators are tasked not only with understanding culture, but also, to some degree, anticipating it. Given AI is trained on content from the past, it will necessarily be a step behind the very latest cultural developments.

For these reasons, any platform valuing broad and free expression must make humans integral to content moderation and appeal processes. How well platforms manage to integrate the tools of human moderation and AI moderation will largely determine the success of these processes going forward.

Transparency and the availability of an appeal process are consistent with either method, and will provide crucial information for auditing those decisions. Better data will both refine AI models and highlight where those models cannot substitute for human judgment. Without transparency and an appeal process, on the other hand, moderation will remain a “black box” where no one understands why decisions are made, and where no one takes accountability for them.

The problem of deboosting and shadowbanning

“Shadowbanning” originally referred to the practice of banning a user without their knowledge. From the shadowbanned user’s end, they are able to post as normal, but other users cannot see or interact with their posts. On Reddit, this is a feature. And YouTube permits channel owners to “hide” users in their comment sections, so only the commenter sees their comment.

The intended advantage of a shadowban over a standard ban is that a normal ban for aggressive or threatening activity would only further inflame that user; the shadowban lets them vent and wonder why no one engages with their vitriol. Additionally, it may prevent actions by the user to circumvent a regular ban, such as creating a new account under an alias.

Eventually, the meaning of “shadowban” evolved. Now, when most people talk about shadowbanning, they’re talking about “algorithmic deboosting,” where the algorithm that determines what posts show up in users’ feeds suppresses that content while still making the content visible on the poster’s page. X, for example, deboosts content that will take users off-site. In 2018, when X was still Twitter, the platform relied on the confusion between the two definitions to hand-wave away criticism that it engaged in algorithmic deboosting.

Documents released after Musk’s purchase suggest X — then Twitter — did engage in algorithmic deboosting of ideas on disputed, politically charged topics. Whether that constitutes a ban based on political viewpoints is for you to decide.

Because it’s impossible for anyone except the algorithm’s designers to know how much engagement a post would generate absent deboosting, users can only speculate whether they were shadowbanned. An algorithmic shadowban is, theoretically, almost as effective as a ban, because users would have to go out of their way to see an author’s post, and only the most determined would do so. In some ways, it might even be worse than a ban. An author who knows they’re banned has the option of trying to intentionally migrate their community to another platform right away. An author who doesn’t know they are shadowbanned may not ever know why their audience dwindled.

Because shadowbans are secret by nature, unaware users cannot invoke any appeal process that might exist. Shadowbans are unlikely to change users’ behaviors because they do not let the user know that they have been penalized, much less provide feedback on the behaviors that caused the user to incur the penalty. This is a bad outcome for platforms committed to freedom of expression. Platforms should work to achieve their goals with more transparency.

This is a difficult problem to “solve” because platforms have a strong and legitimate countervailing interest in obscuring their algorithms to prevent 1) competitors from adopting their approach and 2) users from tailoring their content to the algorithm to artificially boost their reach.

Nevertheless, some approaches are better at respecting transparency and user agency than others. Musk’s announcement that X would deprioritize specific content seems straightforwardly better than just deprioritizing content without the announcement. It allows users to change their behavior to avoid deboosting while achieving X’s aim of reducing that content in users’ feeds. Additionally, X’s choice to make its recommendation algorithm open-source provides substantial feedback to users on what content it will reward (though not all elements of the recommendation system are transparent to users).

Transparency and procedural fairness

In our survey, 81% of respondents said it is important that users receive notice when a platform removes content and receive an explanation for why it did so. This is unsurprising. People generally view a process more favorably if they understand what triggers it and how it operates. In fact, an inconsistent procedure can cause more dissatisfaction than inconsistent outcomes.

Procedural fairness is an important element of transparency. For content removal, procedural fairness means users understand the platform’s rules; how the identified content has broken the rules; how to appeal that decision; the effect and duration of any sanction resulting from the process; and, if appealed, an explanation for the appeal’s decision. How the appellate function records findings and makes them available to users and the public is also important to transparency.

Platforms that suppress user content for reasons ranging from avoiding health misinformation to restraining “hate speech” should be willing to stand behind those reasons and defend their application of policies based on those reasons. While some users will no doubt still be antagonized, many others would forgive disagreements (particularly after an appeal).

Platforms could do even more to underscore a commitment to procedural fairness by giving users an opportunity to provide input on the process. People generally are more likely to deem a process fair when they’ve had the chance to express their views — especially before a process takes place, but even afterward. A platform could aggregate these responses and weigh them against its policies, and if it finds them dramatically misaligned with user expectations, either the policies merit additional review, or the community would benefit from additional explanation.

Meta’s oversight board

Meta, which owns Facebook and Instagram, has the most robust appeals policy of any social media company. It allows people to appeal Facebook content removals (other than for certain safety-related categories), with an oversight board populated by independent experts — including experts in the area of free expression — who decide the most important appeals.

But the oversight board has shortcomings. It does not set company policy, but rather can only decide cases under that policy. While the oversight board overturns Meta’s decision in the majority of its cases, it takes on very few cases overall — in 2021, it overturned Meta on 16 of 20 cases, and in 2022 it overturned Meta on 9 of 12 cases. Of the 265 policy recommendations the oversight board has cited at the time of this writing, 75 have been partially or completely implemented. Most relevant to the issue of transparency, oversight board decisions affect only content removals, not limitations of reach and “shadowbannings.”

Principle 3: Content moderation decisions should be unbiased and should consistently apply the criteria that a platform’s terms of service establish

Platforms have the First Amendment right to organize around whatever principles they choose, including principles that inherently bias or privilege one group or viewpoint. However, if platforms want to promote free speech — as platforms like Facebook and X have indicated is core to their missions — they should apply their rules consistently, predictably, and without double standards or favoritism.

For example, if a platform’s terms of service ban harassment, a platform that promotes free speech should not adopt an overly broad definition of that term or apply the policy differently based on the viewpoint or identity of the person who is harassing or being harassed. In general, platforms that seek to promote free speech should strive to make moderation decisions as free of personal and political bias as possible.

In the case of moderating political speech, any platform that seeks to promote free expression should develop narrow, well-defined, and consistently enforceable rules to minimize the kind of subjectivity that leads to arbitrary and unfair enforcement practices that reduce users’ confidence both in platforms and in the state of free expression online.

Our survey shows that those who believe social media companies are politically biased outnumber those who don’t by more than three to one. Contributing to this perception are a number of public incidents when platforms made moderation decisions that appeared political and/or at odds with their own policies. In some cases, we learned about these incidents only through leaked documents or court disclosures of moderation decisions that appeared to be based on internal or external political pressure. In other cases, the decisions are best understood to reflect decision makers’ personal whims.

User perceptions

Our survey found that a substantial percentage of Americans believe that social media companies are politically biased. Among those who perceive bias, a plurality believe it cuts against conservatives.

Thirty-nine percent of respondents believe social media companies are biased for or against liberals or conservatives, versus 12% who said they aren’t and 48% who said they don’t know. Separating by political affiliation those who believe social media companies are biased, 54% of Democrats said platforms are “somewhat” (37%) or “very” (17%) biased against liberals, while 93% of Republicans said they are “somewhat” (24%) or “very” (69%) biased against conservatives.

Of those who believe the companies are biased, Democrats were more likely than Republicans to see the bias as cross-partisan, with 38% of those Democrats saying platforms are “somewhat” (34%) or “very” (4%) biased against conservatives, while only 5% of those Republicans said platforms are “somewhat” (4%) or “very” (1%) biased against liberals.

‘Hate speech’ and ‘censorship envy’

FIRE and other American free speech organizations and advocates have commented often and at length on the perils of policing “hate speech,” an inherently amorphous and subjective category.

Nevertheless, nearly every major social media platform has a policy against hate speech. Fairness here would, at the very least, demand that platforms that claim to value free expression treat groups alike, and not allow “hate speech” for one perspective that would be disallowed from another.

Perceived unfairness in content moderation decisions leads to “censorship envy.” The coiner of the term, First Amendment scholar (and FIRE plaintiff in the above-mentioned Volokh v. James case) Eugene Volokh, defined it as the “common reaction that, ‘If my neighbor gets to ban speech he reviles, why shouldn’t I get to do the same?’” The unfairness of platforms’ decisions is a point of focus for many activists, who are eager to point out that their opponents receive more favorable treatment. Rather than demand free speech for all, the activists demand censorship in kind. This tendency expands the circle of proscribed speech over time.

Political bias

Even when the stakes are low, confirmation bias makes us suspicious of speech with which we disagree.

When access to social media platforms can allow a message to reach hundreds of millions of people, the stakes can feel extremely high, both to the speaker and to those in a position to make moderation decisions. The incentive and temptation to use that moderation power to achieve a favorable political outcome can be enormous.

No individual person can be truly objective and unbiased. This does not mean objectivity and fairness are shallow values: It means striving for them should come with the knowledge that fully attaining them is not possible. The value is in the striving — in this case, in fairness both in procedures (as discussed in Principle 2) and in moderation decision-making.

Beware the heat of the moment

When natural human bias combines with topics of grave political importance, sudden news events, and demands for quick action, social media platforms have all the ingredients for making decisions they will later come to regret.

Perhaps the most-discussed example of such a decision is the Hunter Biden laptop story. In short, on October 14, 2020, several weeks before the U.S. presidential election, the New York Post published a story about the contents of a laptop allegedly once belonging to then-candidate Joe Biden’s son, Hunter. The Post received the laptop, which contained information about drug use and sexual images of Hunter, from Rudy Giuliani, who almost certainly turned it over to the media to embarrass Hunter, and by extension his father, with the intent to impact the upcoming election.

Facebook and Twitter blocked their users from sharing the article on their platforms. Facebook founder Mark Zuckerberg claimed in 2022 that Facebook did so because the FBI had warned the platform of an impending campaign of Russian misinformation, and Facebook staff thought this story “fit the pattern.”

Both Zuckerberg and then-Twitter CEO Jack Dorsey went on to express regret for their respective platforms’ decision to take down the story. At that point, the damage was done.

Illustrating the Streisand Effect, the decision by the platforms, though brief, backfired. The laptop story became a staple of the culture wars, cited by many (including during heated questioning of social media executives by Congress) as proof positive that platforms favor liberal viewpoints. We will never know with certainty if it was political bias and fear of damaging the Biden campaign that drove the initial hasty decision to block the article, but there’s little doubt it damaged the perception that the platforms are politically fair. In fact, the story motivated Florida to pass its law prohibiting social media companies from taking “any action to censor, deplatform, or shadow ban a journalistic enterprise based on the content of its publication or broadcast,” a law the Supreme Court is currently considering in Moody v. NetChoice.

Allegations of double standards on social media haven’t been confined to one side of the political aisle. The Electronic Frontier Foundation has argued that policies against sexually explicit content have been enforced with bias against non-sexual LGBT content. Others have alleged that Meta’s enforcement practices are biased against pro-Palestinian content. Musk stated that he would ban certain pro-Palestinian slogans including the word “decolonization” and “from the river to the sea,” and on a number of occasions, he has suspended high-profile liberal and left commentators.

As alluded to above, despite Musk’s frequent appeals to principles of free speech, X post-acquisition has been characterized by rapid and sometimes short-lived changes to speech policies, for which he has earned a number of rebukes from FIRE. Rapid policy changes, combined with the embrace of non-transparent deboosting, leave X users little ability to know what is acceptable to post on the platform, its open-source content algorithm notwithstanding.

Choices not to apply the rules can demonstrate bias as well. When Russia invaded Ukraine in 2022, Facebook and Instagram made temporary changes to their rules to allow Ukrainians to call for the deaths of Russians, which would normally violate their policies on hate speech. Principles held only when they are convenient are not principles at all, and a temporary change like this reveals that any principle underlying the hate speech policy is negotiable to those in charge, if the principle can be said to exist at all.

Rapidly evolving situations with significant political ramifications have the greatest opportunity to damage public trust in platforms and, in doing so, damage the environment for expression. Fairness and transparency are most important in these situations.

‘Newsworthiness’ and inherently subjective standards

The desire to treat similar cases differently may lead platforms to employ (or create) subjective or discretionary standards that can plausibly lead to almost any outcome they prefer.

For example, X and Facebook have policies that allow them to keep up posts that violate their rules if those posts are deemed “newsworthy” (in Facebook’s case) or “in the public interest” (in X’s case).

By design, discretionary newsworthiness policies privilege certain kinds of speech or certain speakers. A platform that seeks to promote free speech falls short of that aspiration if even its most informed users cannot reasonably predict what speech the platform will allow.

Some may argue that without these discretionary standards, additional content removals become necessary. That may be true in a narrow sense, but a system that enshrines free speech for some but not others is not one that truly supports free speech. In this case, platforms should either afford a greater allowance for this type of speech, regardless of who it comes from, or they should clearly define “newsworthiness” (or any other subjective standard), so it can’t plausibly apply to any post for which a platform wants to bend the rules.

Perceived bias incentivizes ‘working the refs’

To the extent people and organizations — especially government actors — lobby platforms to ban speech the platforms would otherwise not be inclined to ban, platforms should hold firm and make clear they will not deviate from their policies. When the rules are the rules, and they apply consistently, there’s little for which to lobby.

Conversely, when rules are flexible and subject to politicking, and/or when platforms set a precedent that they frequently change policy in response to live political controversies, they invite efforts to “work the refs,” making their own jobs more difficult. Applying rules consistently and fairly creates less surface to attack, reducing the incidence of lobbying efforts that cite real or perceived double standards.

Threats to the culture of free speech, group polarization, and other consequences

To the extent platforms moderate content to reduce toxicity and polarization and to retain or increase usership, their efforts may have the opposite effect. This is especially true when content moderation decisions are perceived as unfair.

If users believe their “side” is censored unfairly, many will leave that platform for one where they believe they’ll have more of a fair shake. Because the exodus is ideological in nature, it will drive banned users to new platforms where they are exposed to fewer competing ideas, leading to “group polarization,” the well-documented phenomenon that like-minded groups become more extreme over time. Structures on all social media platforms contribute to polarization, but the homogeneity of alternative platforms turbocharges it.

This is simply the marketplace of ideas — and the literal market — at work. Like it or not, partisan media — both traditional and social — respond to market demand through competition and innovation. We would not be better off in a world where competing platforms cannot emerge. But if platforms wish to keep users from leaving their platforms for others and to lower the temperature of polarization that has suffused the discourse broadly, conspicuous fairness would improve their situation.

What platforms committed to free expression actually should do in service of that commitment, and a commitment to civil public discourse, matters greatly. If citizens continue making online platforms central to their speech and political engagement, and they see and face broad and arbitrary censorship, they may internalize that result as indicative of the illusory nature of free speech. It’s not hard to imagine those raised on social media taking their learned expectations of arbitrary content removals or diminutions from the walled gardens of social media into the real world, tolerating and even demanding greater censorship. That result is inconsistent with the free speech culture we need to reap the benefits of our free speech law — and our free speech law will not survive long in a culture that does not value free speech.

Looking forward: Promising and concerning social media developments on the horizon

Given social media’s ubiquity, it can be easy to forget just how new it is.

While precursors to modern social media such as Usenet and Bulletin Board Systems date back more than 40 years, Facebook only celebrated 20 years in business in February. X is two years younger. Instagram is 13 years old.

Everything is in flux, from a platform’s popularity, to its features, to its speech policies. Any confident prediction of what social media will look like 10 years in the future is sure to be wrong.

With that in mind, we want to cautiously speculate on future free speech developments involving social media beyond the scope of our three principles. Some of these, we find promising; others, we find concerning.

Promising: Growing support for alternatives to content moderation, X’s Community Notes

As a free speech organization, FIRE believes the best answer to bad speech is more speech.

An important component of a healthy free speech culture is a strong preference for counterspeech over the removal of speech, even in situations where removal is totally within the rights of the person/entity doing it.

A growing number of writers and experts have expressed skepticism that content moderation on social media will solve the many extremely complex social problems it seeks to answer: namely, misinformation, polarization, public health, and civil discourse. Certainly there is space for innovation in approaches to these problems on social media. X’s Community Notes may be just such an innovative “more speech” approach to the problem of misinformation.

As FIRE has explained before, regulating misinformation is a fraught endeavor. While social media companies have the right to combat misinformation on their platforms, they should understand that some solutions are worse than others for free speech. Two fundamental questions around how one approaches misinformation on social media are, “Who decides what is misinformation?” and, “What happens when something is determined to be misinformation?”

“Who decides” is important. If the fact checker is perceived as biased, users will be unlikely to believe a fact-check on something they’re inclined to believe. Affording fact-checking power to any government would be an enormous and dangerous mistake. What’s more, if, as shown in our polling, users do not trust the platforms themselves, users will likely not believe the platforms’ fact determinations. Likely in recognition of these two realities (and likely with some desire to pass the buck), large platforms have typically outsourced fact-checking to third party groups. Mark Zuckerberg famously said he didn’t want Facebook to be the “arbiter of truth.” But, in effect, through choosing a third-party fact checker, Facebook becomes the arbiter of the arbiter of truth. Given that users do not trust social media platforms, this is unlikely to engender trust in the accuracy of fact checks.

As far as “what happens,” a number of approaches currently exist: either users or platform automation may flag a post, and the platform may react by deboosting or deleting it. Or, sometimes, the platform may use the “more speech” option of labeling, the act of affixing a label to posts deemed misinformation in order to provide corrective context.

Assuming a post is actually misinformation, a labeling approach may persuade those who already believe the misinformation that it is, in fact, false or misleading. This gives the labeling approach an advantage over the practice of removing the post entirely. It represents greater confidence in users to come to correct conclusions when offered more information, while removal assumes they cannot be trusted to distinguish truth from falsehood.

X’s system for addressing misinformation in posts, Community Notes, offers an innovative solution to both the “Who decides what is misinformation?” and “What happens when something is determined to be misinformation?” questions. For “who decides,” it’s right there in the title: community users. For “what happens,” they flag posts they believe are misleading or require more context, and propose a “Note” to add context. Users sign up to contribute to Notes, which means they not only write Notes but also rate — as helpful or not — the Notes written by other contributors.

As an attempt to check bias, only Notes that receive approval from contributors with diverse perspectives (as determined by which Notes the contributor has rated as helpful in the past) are presented to X users at large. Any X user can then rate the Notes. Users whose posts have Notes appended to them are able to appeal the Notes for additional review.
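
The “diverse perspectives” requirement can be illustrated with a toy sketch. This is not X’s actual open-source algorithm; it is a deliberately simplified model under stated assumptions. Each contributor is assumed to belong to a hypothetical perspective cluster inferred from past ratings, and a Note is shown only when raters in at least two clusters each find it helpful at or above a hypothetical threshold. Every name here (`Rating`, `note_is_shown`, `min_helpful_share`, `min_clusters`) is invented for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Rating:
    contributor: str
    cluster: str   # hypothetical perspective group inferred from past ratings
    helpful: bool  # did this contributor rate the Note as helpful?


def note_is_shown(ratings, min_helpful_share=0.6, min_clusters=2):
    """Return True only if raters from at least `min_clusters` distinct
    clusters each rate the Note helpful at or above `min_helpful_share`.
    Both thresholds are assumptions, not values taken from X."""
    by_cluster = defaultdict(list)
    for r in ratings:
        by_cluster[r.cluster].append(r.helpful)

    # Clusters whose own members mostly found the Note helpful.
    agreeing = [
        cluster
        for cluster, votes in by_cluster.items()
        if sum(votes) / len(votes) >= min_helpful_share
    ]
    return len(agreeing) >= min_clusters


# A Note praised by only one cluster stays hidden.
one_sided = [
    Rating("u1", "A", True), Rating("u2", "A", True),
    Rating("u3", "B", False), Rating("u4", "B", False),
]
# A Note that both clusters mostly find helpful is displayed.
bridging = [
    Rating("u1", "A", True), Rating("u2", "A", True),
    Rating("u3", "B", True), Rating("u4", "B", False), Rating("u5", "B", True),
]

print(note_is_shown(one_sided))  # False
print(note_is_shown(bridging))   # True
```

The design point is that raw popularity is not enough: a Note with overwhelming support from a single cluster is treated the same as a Note with no support, which is what makes one-sided brigading less effective in this simplified model.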

As for transparency, all Notes published are downloadable in bulk every day, and the algorithm is open source for anyone to scrutinize. Because Community Notes are crowdsourced and subject to approval from people with diverse perspectives, they may be less vulnerable to bias and easier for users to trust than top-down solutions that may reflect the biases of a much smaller number of stakeholders.

While the Community Notes feature is not a perfect solution — those never exist — it represents an innovative way to grapple with the real issues plaguing top-down fact-checking methods.

We hope additional innovative alternatives to content moderation appear in the future.

Promising: ‘Protocols not platforms’ and middleware could offer more options and control to users

Currently on large social media platforms, users have relatively little control over the workings of the algorithms that choose what content they see or the content moderation policies to which they are subject.

Users who do not like the algorithm or the policies of a specific platform always have the option to leave, but various sources of friction weigh against doing so — whether it’s a decade of photos posted to Instagram or hundreds of friends remaining on Facebook who are unlikely to collectively move to a new platform. Network effects tend to concentrate users on a small number of platforms, reducing the number of user options. And if no existing platform offers speech policies or content algorithms users want, they’re left with the choice to create their own platform (a major undertaking, but one that’s becoming more feasible, as we will discuss).

In the current landscape, a handful of decision-makers at a handful of companies effectively decide the nature of the policies that govern the vast majority of online speech. Several proposals have arisen to improve this state of affairs and offer users greater choice without resorting to unconstitutional government coercion.

In an influential paper for the Knight Institute called “Protocols Not Platforms,” Mike Masnick proposed a vision of social media that operates via protocols. Social media protocols would be a kind of unified language for posting and receiving posts not tied to any one platform. This is similar to how Gmail, Protonmail, and Outlook intercommunicate, allowing users to choose which program to use without locking themselves out of the option of communicating with users on different programs. Social media users would similarly have their choice of interface and, more importantly, would have greater control over the content they receive. Just as you can set up personalized filters for email, social media users on a protocol model could filter which content enters their feed.

One can imagine going through a sign-up process, viewing a list of content-moderation algorithms from different providers, and selecting the one closest to one’s preferences — or choosing to experience a fully unadulterated internet. Alternatively, a user’s experience could be tailor-made based on a virtual interview process. “Do you want to be shown diverse perspectives?” “Do you want to see politics? A lot, or a little?” “Do you want to see sexual content?” “Do you want to see slurs or cursing?”
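As a rough illustration of the interview idea, the sketch below turns a few yes/no answers into a feed filter. It assumes posts arrive with content labels attached by some labeling provider the user has selected. The `Post` and `Preferences` classes, the label names, and `build_filter` are hypothetical and do not correspond to any existing protocol's API.

```python
from dataclasses import dataclass, field


@dataclass
class Post:
    author: str
    text: str
    # Labels such as "politics" or "profanity"; assumed to come from a
    # labeling provider the user has chosen (hypothetical).
    labels: set = field(default_factory=set)


@dataclass
class Preferences:
    show_politics: bool = True
    show_sexual_content: bool = False
    show_profanity: bool = False


def build_filter(prefs):
    """Turn interview answers into a predicate that accepts or rejects a post."""
    blocked = set()
    if not prefs.show_politics:
        blocked.add("politics")
    if not prefs.show_sexual_content:
        blocked.add("sexual")
    if not prefs.show_profanity:
        blocked.add("profanity")

    def allow(post):
        # Keep the post only if none of its labels are blocked.
        return blocked.isdisjoint(post.labels)

    return allow


feed = [
    Post("ann", "Election results are in", {"politics"}),
    Post("bob", "Look at my garden"),
    Post("cat", "A rant full of cursing", {"profanity"}),
]

prefs = Preferences(show_politics=False, show_profanity=True)
allow = build_filter(prefs)
visible = [post.author for post in feed if allow(post)]
print(visible)  # ['bob', 'cat']
```

A user who wants the unfiltered experience would answer yes to every question, which leaves the blocked set empty and lets every post through.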

The interoperable nature of these protocols decreases or eliminates the friction of moving between social media platforms. Changing your content algorithm would no longer require moving from, for example, X to BlueSky; it could require as little as selecting a new option.

With a “middleware” approach, even if one’s friends stay on older or more traditional platforms, it may not pose a problem. User-selected interfaces could aggregate content both from protocols and from users’ traditional social media accounts, pulling and subjecting content from those platforms to the user’s selected algorithms and filters.
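The aggregation step can be sketched in a few lines. The example below is a simplified illustration that assumes each source, whether a protocol feed or a traditional platform account, can be represented as a function returning a list of posts. The fetch functions, the post fields, and `aggregate` are hypothetical stand-ins, not real platform APIs.

```python
def aggregate(sources, keep, sort_key):
    """Merge posts from every source, apply the user's filter, then rank them."""
    merged = [post for fetch in sources for post in fetch()]
    return sorted((p for p in merged if keep(p)), key=sort_key, reverse=True)


def fetch_protocol_feed():
    # Stand-in for posts pulled from an open protocol (hypothetical data).
    return [
        {"source": "protocol", "author": "ann", "text": "hello", "timestamp": 3},
        {"source": "protocol", "author": "spammer", "text": "buy now", "timestamp": 5},
    ]


def fetch_legacy_feed():
    # Stand-in for posts pulled from a traditional platform account (hypothetical data).
    return [
        {"source": "legacy", "author": "bob", "text": "photo", "timestamp": 4},
    ]


# The user's chosen filter and ranking: drop one muted author, newest first.
feed = aggregate(
    [fetch_protocol_feed, fetch_legacy_feed],
    keep=lambda p: p["author"] != "spammer",
    sort_key=lambda p: p["timestamp"],
)

print([p["author"] for p in feed])  # ['bob', 'ann']
```

Because the filter and the ranking are passed in as parameters, swapping in a different moderation or ranking provider changes nothing about where the posts come from.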

The possibilities are endless. This user choice would benefit both those who want to see more content moderation and those who want to see less or none at all.

Masnick’s paper inspired Twitter to create the protocol-based social platform BlueSky. Mastodon, similarly, is a federated social media program where users can run their own platforms with their own content policies and interfaces. We may see more such programs shortly. In some ways, this could be seen as a return to the early internet days of Usenet.

These sorts of emerging platforms have a strong tendency toward being open source, with the transparency benefits that openness entails.

Concerning: Deep deplatforming

When a user is deplatformed from one social media platform, they can still move to another. If a user is unlucky enough to be banned from all social media platforms or websites, they have the ability to create their own.

However, “deep deplatforming” attempts to remove people’s ability to spread a message online by targeting the infrastructure needed to run a website.

These infrastructure layers include hacking protection services, server hosting companies, internet service providers, domain name registrars, and, at the very bottom, regional internet registries. As University of Tennessee law professor Nicholas Nugent put it in a hypothetical about a deep-deplatformed user named Jane:

If no website will host a user like Jane who holds unpopular viewpoints, she can stand up her own website. If no infrastructure provider will host her website, she can vertically integrate by purchasing her own servers and hosting herself. But if she is further denied access to core infrastructural resources like domain names, IP addresses, and network access, she will hit bedrock. The public internet uses a single domain name system and a single IP address space. She cannot create alternative systems to reach her audience unless she essentially creates a new internet and persuades the world to adopt it. Nor can she realistically build her own global fiber network to make her website reachable. If she is denied resources within the core infrastructure layer, she and her viewpoints are, for all intents and purposes, exiled from the internet.

The above-described deep deplatforming is a serious threat to freedom of expression on the internet, and it’s different in principle from deplatforming where alternate platforms exist or can be created.

While many erroneously analogize social media platforms to “common carriers,” the government-created monopolies that run domain name and IP address registration at the base of the internet infrastructure ought to be considered common carriers and ought not anoint themselves the final and absolute arbiters of what users can say online. Importantly, these infrastructure monopolies are not like private social media platforms: They are not in the business of delivering curated compilations of content subject to First Amendment protection.

While efforts at “deep deplatforming” have thus far targeted some of the most controversial websites including Parler, The Daily Stormer, Kiwi Farms, and Gab, the “slippery slope tendency” leads us to believe that we will see such tactics spread to less controversial targets over time.

Concerning: International threats to free speech online

As we have stated countless times throughout this report, the United States government lacks the authority to regulate internet speech unless it falls within a narrow First Amendment exception.

However, the European Union, unimpeded by First Amendment-style prohibitions, has taken a much heavier hand in regulating social media companies that do business in EU member states, most notably with the Digital Services Act. The act, which took effect earlier this year, imposes hefty fines on “very large online platforms” that do not censor certain proscribed harmful and hateful content.

As FIRE fellow Jacob Mchangama put it in the Los Angeles Times:

Removing illegal content sounds innocent enough. It’s not. “Illegal content” is defined very differently across Europe. In France, protesters have been fined for depicting President Macron as Hitler, and illegal hate speech may encompass offensive humor. Austria and Finland criminalize blasphemy, and in Victor Orban’s Hungary, certain forms of “LGBT propaganda” is [sic] banned.

The Digital Services Act will essentially oblige Big Tech to act as a privatized censor on behalf of governments — censors who will enjoy wide discretion under vague and subjective standards. Add to this the EU’s own laws banning Russian propaganda and plans to toughen EU-wide hate speech laws, and you have a wide-ranging, incoherent, multilevel censorship regime operating at scale.

While the law does not apply directly to Americans, in theory, social media companies could adopt a “least common denominator” approach and comply with the DSA worldwide. This is not difficult to imagine: Many large websites indeed responded to the EU’s privacy regulation, the General Data Protection Regulation, by complying even outside of the EU. Why? Because it’s too costly or difficult to determine who the GDPR does and does not cover.

Government requirements that social media companies impose speech restrictions they would not elect for themselves are bad, regardless of whether the United States or another country imposes them. The DSA is a good reminder that Americans are lucky to have the First Amendment’s protection.

Our sincere hope is that social media companies do not choose to impose greater restrictions on Americans’ speech on their platforms simply to avoid putting resources into determining who the DSA covers.